One web extension to rule them all

How to use the Platypush web extension to customize your browser and connect things together.

Once upon a time, there was a worldwide web where web extensions were still new toys to play with, and the major browsers that supported them (namely Firefox and Chrome) didn’t mind giving them very wide access to their internals and APIs to do (more or less) whatever they pleased. The idea was that these browser add-ons/apps/extensions (the lines between them were still quite blurry at the time) could become a powerful way to run any piece of software the user wanted within the browser (even locally, without connecting to another website).

It was an age when powerful extensions could deeply change many things in the browser (like the now-defunct Vimperator, which could completely redesign the browser’s UI to make it look and behave like vim), and user scripts were a powerful way for users to run anything they liked wherever they liked. I used Vimperator custom scripts a lot to map any sequence of keys to any custom action, modeled as plain JavaScript. And I used user scripts a lot as well; those still exist, but with many more limitations than before.

That wild-West age of web extensions and apps is largely gone by now. It didn’t take long for malicious actors to realize that the freedom given to web extensions made them a perfect vector for running malware/spyware directly within the browser, in many cases bypassing several anti-malware layers. That generation of web extensions also suffered from fragmentation: Firefox and Chrome had developed their own APIs (like Mozilla’s XUL and Chrome Apps) with little overlap. That made developing an extension that targeted multiple browsers very expensive, and many extensions and apps were only available for a particular browser.

The case for greater security, separation of concerns, and less fragmentation drove the migration towards the modern WebExtensions API. Around the end of 2017, both Mozilla and Google ended support for the previous APIs in their respective browsers. They also added more restrictions for add-ons and scripts not approved on their stores (recent versions of Firefox only allow you to permanently install extensions published on the store), and added more constraints and checks to their review processes.

The new API has made it harder for malicious actors to attack users through the browser, and it has also greatly lowered the barriers to developing a cross-browser extension. On the other hand, it has also greatly reduced the degrees of freedom offered to extensions. Several extensions that required deep integration with the browser (like Vimperator and Postman) were either migrated to stand-alone apps or abandoned. User scripts have become a niche, geeky feature, offered with more limitations than before by third-party extensions like Greasemonkey/Tampermonkey. Firefox’s recent user-scripts API is a promising way to revive some of the power of the past wave, but so far only Firefox supports it.

As a power user, while I understand the motivations that led browser developers to fence and sandbox extensions more strictly, I still miss the times when we could deeply customize our browsers, and what they could do, however we liked. I built Platypush over the years to satisfy my need for endless extensibility and customization on the backend side, with everything provided through a uniform and coherent API and platform. Applying the same philosophy to my web browser seemed the natural next step. With the Platypush web extension, I’ve tried to build a solution for several needs faced by many power users.

First, we’ve got plenty of backend solutions to run things, and smart home devices to act on things and pass information around. But the dear ol’ desktop web browser has often been left behind in this progress in automation, even though many people still spend a lot of time on the web from desktop devices. Most front-end solutions for cloud/home automation come as mobile apps. Some automation solutions provide a web app/panel (Platypush does as well), but web panels receive less and less attention in an increasingly mobile-centric world.

And even when your solution provides a web app, there’s another crucial factor to take into account: the time to action. How much time passes between you thinking “I’d like to run this action on that device” and the action actually being executed on that device? And remember that, especially when it comes to smart devices, the time-to-action in the “smart” way (like you toggling a light-bulb remotely) should never be longer than the time-to-action in the “dumb” way (like you standing up and toggling a switch). That’s your baseline.

When I’m working on my laptop, I sometimes want to run an action on another device: send a link to my phone, turn on the lights or the fan, play the video currently playing on the laptop on my media center, move the Spotify playlist playing in my bedroom to my living room (or the other way around), and so on. Sure, for some of these problems there’s a Platypush/HomeAssistant/OpenHAB/BigCorp Inc. front-end solution, but it usually involves either taking your hands off your laptop to grab your phone, or opening/switching to the tab with your platform’s web app, searching for the right menu/option, scrolling a bit, and then running the action. Voice assistants are another option (and Platypush provides integrations with many of the voice technologies around), but talking your way through the day isn’t yet the frictionless, fast process many want, nor should it be the only way. For me, minimizing the time-to-action means being able to run any action on the fly (ideally with at most three clicks or keystrokes) from any tab or from the toolbar itself.

Sure, there are some web extensions that solve some of those problems. But using them usually involves:

- Relying on someone else’s solution to your problem, which isn’t necessarily the best one for your use case.
- Polluting your browser with lots of extensions to execute different types of actions. Sending links to other devices may involve installing the Pushbullet/Join extension; playing media on Kodi, another extension; playing media on the Chromecast, another extension; saving links to Instapaper/Evernote/Pocket, other extensions; sharing on Twitter/Facebook, yet more extensions; controlling your smart home hub, yet another extension... and the list goes on, until your browser’s toolbar is packed with icons and you can’t even recall what some of them do, which defeats the whole purpose of optimizing the time-to-action from the web browser.
- And, of course, installing too many extensions increases your browser’s attack surface, which is precisely the problem the WebExtensions API was supposed to solve in the first place.

I started this journey by building a simple web extension that I could use to quickly debug Platypush commands executed on Raspberry Pis and other smart devices around my house over the web API/WebSocket/MQTT. Then I realized that I could use the same solution to optimize the time-to-action, i.e. to solve the problem of “I want to switch on the lights right now, without grabbing my phone, switching tabs, or standing up, while I’m working on my Medium article on the laptop.” That means running actions either from the toolbar itself (preferably with all the actions grouped under the same extension button and UI) or through the right-click context menu, like a native browser action. Being able to run any Platypush action on any remote device from my browser meant that I could control any device or remote API from the same interface, as long as there is a Platypush plugin to interact with that device/API.

But that wasn’t enough for me yet. Not all the actions that I may want to run on the fly from anywhere in the browser can be translated into an atomic Platypush action. Platypush remote procedures can certainly help with running more complex logic on the backend, but I wanted the extension to also cover use cases that require interaction with the browser context: things like “play this video on my Chromecast (yes, even if I’m on Firefox)”, “translate this page and make sure that the result doesn’t look like a 1997 website (yes, even if I’m on Firefox)”, “download this Magnet link directly on my NAS”, and so on. All the way up to custom event hooks that react to Platypush events triggered by other devices with custom logic running in the browser: things like “synchronize the clipboard on the laptop if another Platypush device sends a ClipboardEvent”, “send a notification to the browser with the spoken text when the Google Assistant plugin triggers a ResponseEvent”, or “react when a sensor goes above a certain threshold”.

I wanted the ability to define all of these actions through a native JavaScript API similar to the one provided by Greasemonkey/Tampermonkey. But while most of the user scripts run by those extensions only operate within the context of a web page, I wanted to decouple my script snippets from the web page and build an API that provides access to the browser context and to the Platypush actions available on any remote device, lets you run background code in response to custom events, and makes it easy to synchronize the configuration across devices. So let’s briefly go through the extension to see what you can do with it.

Installation and usage

First, you need a Platypush service running somewhere. If you haven’t tried it before, refer to any of the links in the previous sections to get started (I’ve made sure that installing, configuring, and starting a base environment doesn’t take longer than five minutes, I promise :) ). Also, make sure that you enable the HTTP backend in the config.yaml, as the webserver is the channel the extension uses to communicate with the server. Once you have a Platypush instance running on e.g. a Raspberry Pi, another server, or your laptop, get the web extension.

You can also build the extension from source. First, make sure that you have npm installed, then clone the repo:

```shell
git clone https://git.platypush.tech/platypush/platypush-webext
```

Install the dependencies and build the extension:

```shell
npm install
npm run build
```

At the end of the process, you should have a dist folder with a manifest.json.

- In Chrome (or any Chromium-based browser), go to Extensions, enable Developer mode, click Load Unpacked and select the dist folder.
- In Firefox, go to about:debugging -> This Firefox -> Load Temporary Add-on and select the manifest.json file.

Note that recent versions of Firefox only allow unpacked extensions (i.e. any extension not published on the Firefox add-ons website) to be loaded temporarily through about:debugging. This means that such an extension will be lost when the browser is restarted; however, restoring the configuration of the Platypush extension after reinstalling it is a very quick process.

Once installed in the browser, the extension icon will appear in the toolbar.

Click on the icon, then on the available link to open the extension configuration tab, and add your Platypush device to the configuration.

Once the device is added, click on its name from the menu and select Run Action.

The Run Action tab comes with two modes: request mode and script mode. In request mode, you can run actions directly on a remote Platypush device through a dynamic interface. You’ve got a form with an autocomplete menu that displays all the actions available on your device, and upon selection, the form is pre-populated with all the arguments available for that action, along with their default values and descriptions. This interface is very similar to the Execute tab of the Platypush web panel, and it makes it super easy to quickly test and run commands on another host.

You can use this interface to run any action on any remote device, as long as there’s a plugin installed and configured for it: file system management, media center controls, voice assistants, cameras, switches, sensor data, cloud services, you name it. You can also run procedures stored on the remote device (their action names start with the procedure prefix), and you can pass the URL of the active tab to an action as an argument by using the special variable $URL$ as an argument value. For instance, you can create an action that sends the current URL to your mobile device through pushbullet.send_note, with both body and url set to $URL$. Once you’re happy with your action, you can save it so it’s available both from the toolbar and from the browser context menu.
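To make the $URL$ mechanism more concrete, here’s a minimal sketch of how a stored action could be turned into a request. It is not the extension’s code: substituteURL and buildRequest are hypothetical helpers, and the message shape ({ type: 'request', action, args }) is assumed from the Platypush HTTP API documentation.

```javascript
// Hypothetical sketch, not the extension's code: how a stored action with
// $URL$ placeholders could be turned into a Platypush request.

// Replace every occurrence of $URL$ in string arguments with the tab URL.
// split/join is used instead of String.prototype.replace because replace()
// would interpret $-sequences such as $& in the replacement string.
function substituteURL(args, currentURL) {
  const result = {};
  for (const [name, value] of Object.entries(args)) {
    result[name] = typeof value === 'string'
      ? value.split('$URL$').join(currentURL)
      : value;
  }
  return result;
}

// Assumed message format of the Platypush HTTP API.
function buildRequest(action, args = {}) {
  return { type: 'request', action, args };
}

const storedAction = {
  action: 'pushbullet.send_note',
  args: { body: '$URL$', url: '$URL$' },
};

const request = buildRequest(
  storedAction.action,
  substituteURL(storedAction.args, 'https://example.org/article'),
);

// The request could then be POSTed as JSON to the HTTP backend (assumed
// default: http://<host>:8008/execute), plus whatever authentication
// your Platypush version and configuration require.
console.log(JSON.stringify(request));
```

Non-string arguments are passed through untouched, so numeric or boolean parameters of an action are not affected by the substitution.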

You can also associate keybindings with your actions, so you can run them from any tab with just a few keystrokes. The mappings take the form <Ctrl><Alt><n>, with n between 0 and 9; however, Chromium-based browsers limit the number of keybindings per extension to a maximum of 4, for reasons I’m not aware of.
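To make the binding constraints concrete, here’s a small sketch with hypothetical function names (not part of the extension) that validates a binding string in the format above and checks it against the per-browser limit. The limit of 4 mirrors the Chrome restriction mentioned above, which applies to the suggested shortcuts an extension declares.

```javascript
// Hypothetical helpers illustrating the <Ctrl><Alt><n> format described
// above; they are not part of the extension's code.

// Returns the slot number n (0-9), or null if the binding doesn't match.
function parseBinding(binding) {
  const match = /^<Ctrl><Alt><([0-9])>$/.exec(binding);
  return match ? Number(match[1]) : null;
}

// Chromium-based browsers accept at most 4 suggested shortcuts per
// extension; otherwise the 0-9 range allows up to 10 bindings.
function canAddBinding(existingBindings, isChromium) {
  const limit = isChromium ? 4 : 10;
  return existingBindings.length < limit;
}

console.log(parseBinding('<Ctrl><Alt><3>'));   // 3
console.log(parseBinding('<Ctrl><Shift><3>')); // null
```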

If you only needed a way to execute Platypush actions remotely from your browser, this is actually all you need. The action will now be available from the extension toolbar:

And from the context menu:

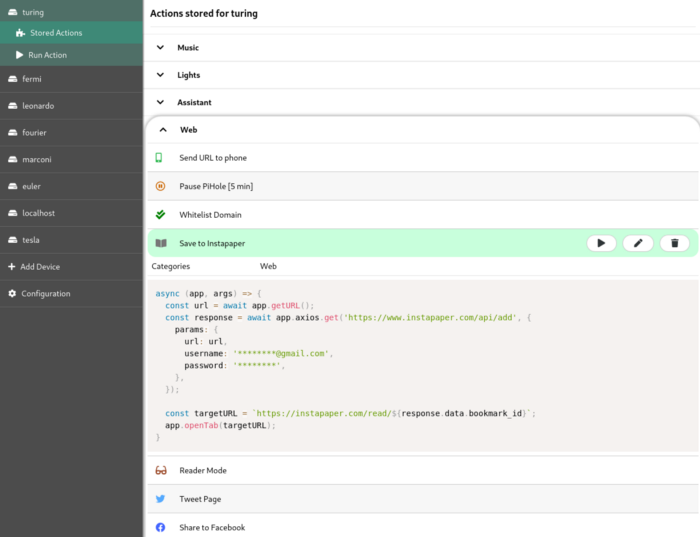

You can easily debug/edit stored actions from the Stored Action tab in the extension’s configuration page.

Script mode

The other (and more powerful) way to define custom actions is through scripts. Scripts can be used to glue together the Platypush API (or any other API) and the browser API.

Select Script from the selector at the top of the Run Action tab. You will be presented with a JavaScript editor with a pre-loaded script template:

The page also provides a link to a Gist showing examples for all the available pieces of the API. In a nutshell, these are the most important pieces you can use to build your user scripts:

- args includes relevant context information for your scripts, such as the target Platypush host, the tabId, and the target element, if the action was called from a context menu on a page.
- app exposes the API available to the script.

Among the methods exposed by app:

- app.getURL returns the URL in the active tab.
- app.setURL changes the URL rendered in the active tab, while app.openTab opens a URL in a new tab.
- app.notify(message, title) displays a browser notification.
- app.run executes actions on a remote Platypush device.
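Since user scripts only receive app and args, one way to try a script outside the browser is to stub app. The sketch below is hypothetical: createMockApp is not part of the extension, it only fakes the methods listed above, and their behaviour here is an assumption (for instance, app.run resolving to { success: true } unless a canned response is provided).

```javascript
// Hypothetical stand-in for the extension's app object, useful to dry-run
// a user script outside the browser. Only the methods listed above are
// stubbed, and their behaviour here is an assumption, not the real API.
function createMockApp(currentURL, runResponses = {}) {
  const calls = { run: [], notify: [], openTab: [] };
  const app = {
    async getURL() { return currentURL; },
    async setURL(url) { currentURL = url; },
    async openTab(url) { calls.openTab.push(url); },
    async notify(message, title) { calls.notify.push({ message, title }); },
    async run(request, host) {
      calls.run.push({ request, host });
      // Return a canned response for the action, or a generic success.
      return runResponses[request.action] || { success: true };
    },
  };
  return { app, calls };
}

// Example: dry-run a tiny script against the mock.
const script = async (app, args) => {
  const url = await app.getURL();
  await app.run({ action: 'pushbullet.send_note', args: { body: url, url } }, args.host);
  app.notify('Link sent');
};

const { app, calls } = createMockApp('https://example.org');
script(app, { host: 'raspberrypi' }).then(() => {
  console.log(calls.run[0].request.args.url); // https://example.org
});
```

Recording every call in calls makes it easy to check which actions a script would have sent, and to which host, without touching any real device.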

For example, this is a possible action to cast YouTube videos to the default Chromecast device:

```javascript
// Platypush user script to play the current URL
// on the Chromecast if it is a YouTube URL.
async (app, args) => {
  const url = await app.getURL();
  if (!url.startsWith('https://www.youtube.com/watch?v=')) {
    return;
  }

  const response = await app.run({
    action: 'media.chromecast.play',
    args: {
      resource: url,
    },
  }, args.host);

  if (response.success) {
    app.notify('YouTube video now playing on Chromecast');
  }
}
```
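The check above only accepts URLs that start with https://www.youtube.com/watch?v=, so mobile (m.youtube.com) and short (youtu.be) links are silently ignored. A slightly more tolerant check could parse the URL instead; the helper name and the list of accepted hosts below are my own assumptions.

```javascript
// Hypothetical helper: a more tolerant check than startsWith(), accepting
// the common YouTube hosts and short links. The host list is an assumption.
function isYouTubeVideo(rawURL) {
  let url;
  try {
    url = new URL(rawURL);
  } catch (e) {
    return false; // not an absolute URL
  }

  // Normalize www.youtube.com and m.youtube.com to youtube.com
  const host = url.hostname.replace(/^www\.|^m\./, '');
  if (host === 'youtu.be') {
    return url.pathname.length > 1; // https://youtu.be/<video id>
  }

  return host === 'youtube.com' && url.pathname === '/watch' && url.searchParams.has('v');
}
```

In the script, the startsWith() test could then become if (!isYouTubeVideo(url)) return;. Whether the Chromecast plugin accepts a youtu.be link as a resource is worth verifying on your own setup.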

- app.axios.[get|post|put|delete|patch|head|options]: The API also exposes the Axios API to perform custom AJAX calls to remote endpoints. For example, if you want to save the current URL to your Instapaper account:

```javascript
// Sample Platypush user script to save the current URL to Instapaper
async (app, args) => {
  const url = await app.getURL();
  const response = await app.axios.get('https://www.instapaper.com/api/add', {
    params: {
      url: url,
      username: '********@****.***',
      password: '******',
    },
  });

  const targetURL = `https://instapaper.com/read/${response.data.bookmark_id}`;
  app.openTab(targetURL);
}
```

- app.getDOM returns the DOM/content of the current page (as a Node element), while app.setDOM replaces the DOM/content of the page (given as a string). For example, you can combine the provided DOM API with the Platypush Translate plugin to translate a web page on the fly:

```javascript
// Platypush user script to translate a web page through the Google Translate API
async (app, args) => {
  const dom = await app.getDOM();

  // Translate the page through the Platypush Google Translate plugin
  // (https://docs.platypush.tech/en/latest/platypush/plugins/google.translate.html).
  // The plugin also splits the HTML into multiple requests if it's too long,
  // to circumvent Google's limit on maximum input text.
  const response = await app.run({
    action: 'google.translate.translate',
    args: {
      text: dom.body.innerHTML,
      format: 'html',
      target_language: 'en',
    }
  }, args.host);

  // The new body will contain a <div> with the translated HTML,
  // a hidden <div> with the original HTML and a top fixed button
  // to switch back to the original page.
  const translatedDiv = `
    <div class="platypush__translated-container">
      <div class="platypush__translated-banner">
        <button type="button"
          onclick="document.body.innerHTML = document.querySelector('.platypush__original-body').innerHTML">
          See Original
        </button>
      </div>

      <div class="platypush__translated-body">
        ${response.translated_text}
      </div>

      <div class="platypush__original-body">
        ${dom.body.innerHTML}
      </div>
    </div>`;

  const style = `
    <style>
      .platypush__original-body {
        display: none;
      }

      .platypush__translated-banner {
        position: fixed;
        background: white;
        color: black;
        z-index: 99999;
      }
    </style>`;

  // Reconstruct the DOM and change it.
  dom.head.innerHTML += style;
  dom.body.innerHTML = translatedDiv;
  await app.setDOM(`<html>${dom.getElementsByTagName('html')[0].innerHTML}</html>`);
}
```
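The comment in the script above notes that the Platypush plugin splits long HTML into multiple requests. I don’t know exactly how the plugin does it; as a rough illustration of the idea, here’s a naive splitter (splitHTML is a hypothetical helper) that cuts the input into chunks below a size limit, preferably right after a closing angle bracket.

```javascript
// Naive sketch of splitting HTML into chunks of at most maxLength characters
// before sending them to a translation API with an input size limit. Cuts
// are placed right after the last '>' in each window so that tags are not
// broken in half; if a window contains no '>', it falls back to a hard cut.
// Elements may still span chunks, so this is an illustration, not the
// plugin's actual algorithm.
function splitHTML(html, maxLength) {
  if (!Number.isInteger(maxLength) || maxLength < 1) {
    throw new RangeError('maxLength must be a positive integer');
  }

  const chunks = [];
  let start = 0;
  while (start < html.length) {
    let end = Math.min(start + maxLength, html.length);
    if (end < html.length) {
      // Look for the last '>' inside [start, end)
      const lastTagEnd = html.lastIndexOf('>', end - 1);
      if (lastTagEnd >= start) {
        end = lastTagEnd + 1;
      }
    }
    chunks.push(html.slice(start, end));
    start = end;
  }
  return chunks;
}

console.log(splitHTML('<p>a</p><p>b</p>', 8));
```

Concatenating the chunks always gives back the original string, so the translated pieces can be joined in order once each request returns.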

- The extension API also exposes the Mercury Parser API to simplify/distill the content of a web page. You can combine the elements seen so far into a script that simplifies a web page for better readability, or to make it more printer-friendly:

```javascript
// Platypush sample user script to simplify/distill the content of a web page
async (app, args) => {
  const url = await app.getURL();

  // Get and parse the page body through the Mercury API
  const dom = await app.getDOM();
  const html = dom.body.innerHTML;
  const response = await app.mercury.parse(url, html);

  // Define a new DOM that contains the simplified body as well as
  // the original body as a hidden <div>, and provide a top fixed
  // button to switch back to the original content.
  const style = `
    <style>
      .platypush__simplified-body {
        max-width: 30em;
        margin: 0 auto;
        font-family: Helvetica, Arial, sans-serif;
        font-size: 20px;
        color: #333;
      }

      .platypush__simplified-body h1 {
        border-bottom: 1px solid black;
        margin-bottom: 1em;
        padding-top: 1em;
      }

      .platypush__original-body {
        display: none;
      }

      .platypush__simplified-banner {
        position: fixed;
        top: 0.5em;
        left: 0.5em;
        background: white;
        color: black;
        z-index: 99999;
      }
    </style>`;

  const simplifiedDiv = `
    <div class="platypush__simplified-container">
      <div class="platypush__simplified-banner">
        <a href="#"
          onclick="document.body.innerHTML = document.querySelector('.platypush__original-body').innerHTML; return false;">
          See Original
        </a>
      </div>

      <div class="platypush__simplified-body">
        <h1>${response.title}</h1>
        ${response.content}
      </div>

      <div class="platypush__original-body">
        ${dom.body.innerHTML}
      </div>
    </div>`;

  // Construct and replace the DOM
  dom.head.innerHTML += style;
  dom.body.innerHTML = simplifiedDiv;
  await app.setDOM(`<html>${dom.getElementsByTagName('html')[0].innerHTML}</html>`);
}
```
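The translate and simplify scripts share the same pattern: new content on top, the original body kept in a hidden div, and a fixed banner to switch back. If you write several scripts like these, a small helper can keep the markup in one place. wrapWithOriginal is hypothetical (not part of the extension API); it reuses the platypush__ class names from the scripts above, so the same style blocks still apply.

```javascript
// Hypothetical helper factoring out the pattern shared by the translate and
// simplify scripts: new content on top, original body hidden, and a fixed
// banner with a button that restores the original.
function wrapWithOriginal(prefix, newHTML, originalHTML) {
  // e.g. cls('body') -> 'platypush__simplified-body' for prefix 'simplified'
  const cls = (name) => `platypush__${prefix}-${name}`;
  return `
    <div class="${cls('container')}">
      <div class="${cls('banner')}">
        <button type="button"
          onclick="document.body.innerHTML = document.querySelector('.platypush__original-body').innerHTML">
          See Original
        </button>
      </div>
      <div class="${cls('body')}">${newHTML}</div>
      <div class="platypush__original-body">${originalHTML}</div>
    </div>`;
}
```

Inside a user script, the body replacement could then read dom.body.innerHTML = wrapWithOriginal('translated', response.translated_text, dom.body.innerHTML);.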

- Finally, you can access the target element if you run the action through a context menu (for example, by right-clicking on an item on the page). Because of WebExtensions API limitations (only JSON-serializable objects can be passed around), the target element is passed in args as a string, but you can easily convert it (or any other HTML) to a DOM object through the app.HTML2DOM method. For example, you can extend the initial YouTube-to-Chromecast user script to cast any audio or video item present on a page:

```javascript
// Sample Platypush user script to cast the current tab or any media item selected
// on the page to the default Chromecast device configured in Platypush.
async (app, args) => {
  const baseURL = await app.getURL();

  // Default URL to cast: current page URL
  let url = baseURL;

  if (args.target) {
    // The user executed the action from a context menu
    const target = app.HTML2DOM(args.target);

    // If it's a <video> or <audio> HTML element then it will have a <source> tag
    const src = target.querySelector('source');

    // Otherwise, check if it's a link
    const link = target.tagName.toLowerCase() === 'a' ? target : target.querySelector('a');

    // Keep the previous URL if the attribute is missing
    if (src) {
      url = src.getAttribute('src') || url;
    } else if (link) {
      url = link.getAttribute('href') || url;
    }
  }

  // Resolve relative URLs (e.g. "/video.mp4" or "video.mp4") against the
  // page URL; absolute http(s) and magnet links are left untouched.
  if (!/^https?:\/\//.test(url) && !url.startsWith('magnet:?')) {
    url = new URL(url, baseURL).href;
  }

  // Finally, cast the media
  await app.run({
    action: 'media.chromecast.play',
    args: {
      resource: url,
    }
  }, args.host);
}
```

With these basic blocks, you should be able to create any custom browser actions that you want. Some examples:

- Convert the current web page to PDF through the Platypush webpage.simplify plugin and deliver it to your Kindle as an attachment through the Platypush GMail plugin.
- Send an email to someone containing the text selected on a page.
- Translate some text selected on a page on the fly.
- Share the current link to Twitter/Facebook/LinkedIn (and ditch all the other share-to-social extensions).
- Download magnet/torrent links on a page directly to your NAS.
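As a starting point for the magnet-link idea, here’s a sketch. extractMagnetLinks is a hypothetical helper that scans the page HTML for magnet links, and downloadMagnets assumes that the target device has a torrent plugin exposing a torrent.download action with a torrent argument; check the plugin documentation for your Platypush version before relying on those names.

```javascript
// Hypothetical helper: collect the distinct magnet URIs found in page HTML.
function extractMagnetLinks(html) {
  const links = new Set();
  // Matches href="magnet:?..." or href='magnet:?...'
  const pattern = /href\s*=\s*["'](magnet:\?[^"']+)["']/gi;
  let match;
  while ((match = pattern.exec(html)) !== null) {
    // innerHTML serializes '&' in attributes as '&amp;': decode it back
    links.add(match[1].replace(/&amp;/g, '&'));
  }
  return [...links];
}

// Sample user script built on the helper above.
const downloadMagnets = async (app, args) => {
  const dom = await app.getDOM();
  const links = extractMagnetLinks(dom.body.innerHTML);

  for (const link of links) {
    // Assumption: torrent.download with a "torrent" argument is available
    // on the target device; adjust the action and args to your setup.
    await app.run({ action: 'torrent.download', args: { torrent: link } }, args.host);
  }

  app.notify(`${links.length} magnet link(s) sent to the NAS`);
};
```

Using a Set avoids sending the same torrent twice when a page links it from several places.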

Configuration Backup and Restore

Finally, you can easily edit, back up, and restore the extension configuration through the Configuration tab. The configuration can be saved to or loaded from a file, backed up to or restored from a Platypush device (look ma’, no cloud!), or loaded from a URL.

Work in Progress

The extension is still under development, and I’m open to suggestions, tickets, and pull requests on the repository page. Two features, in particular, are next on my roadmap:

Integration with the Platypush WebSocket protocol

That would allow many interesting features, such as checking the health status of the remote devices, transferring larger chunks of data (like audio/video streams from remote devices) and, most of all, setting up event hooks: scripts that run automatically when a Platypush device triggers an event, e.g. when a voice assistant response is processed, the media status changes, a lights scene changes, or new sensor data is received.
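Event hooks don’t exist in the extension yet, so the following is only a sketch of how a dispatcher for them might look. It assumes that events arrive over the WebSocket as JSON messages with type set to 'event' and args.type holding the fully-qualified event class name, which is how I understand Platypush serializes events; the EventHooks class and its methods are hypothetical.

```javascript
// Hypothetical sketch of the planned event hooks: handlers registered per
// Platypush event type, dispatched when an event arrives over the WebSocket.
// The message shape ({ type: 'event', args: { type: ... } }) is an assumption.
class EventHooks {
  constructor() {
    this.handlers = new Map();
  }

  // Register a handler for a fully-qualified event type.
  on(eventType, handler) {
    if (!this.handlers.has(eventType)) {
      this.handlers.set(eventType, []);
    }
    this.handlers.get(eventType).push(handler);
  }

  // Parse a raw WebSocket message, run the matching handlers and return
  // how many were run (0 for non-event messages).
  async dispatch(rawMessage) {
    const msg = JSON.parse(rawMessage);
    if (msg.type !== 'event' || !msg.args) return 0;
    const handlers = this.handlers.get(msg.args.type) || [];
    await Promise.all(handlers.map((handler) => handler(msg.args)));
    return handlers.length;
  }
}

// Usage sketch (inside the extension, with an open WebSocket "ws"):
// const hooks = new EventHooks();
// hooks.on('platypush.message.event.assistant.ResponseEvent', (event) => {
//   // e.g. show the assistant's response in a browser notification
// });
// ws.onmessage = (msg) => hooks.dispatch(msg.data);
```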

Support for third-party libraries through the API

As of now, the scripts API exposes the axios and Mercury Parser libraries, but I believe that, for the sake of flexibility, it should be possible to import external libraries into user scripts through something as simple as:

```javascript
const jquery = app.loadScript('https://some.cdn/jquery.min.js');
```

Eventually, if the project gets enough traction, I’d love to create a repository where users could share their creations — as long as we all keep in mind that with great power comes great responsibility :)

Conclusions

I understand the security and uniformity concerns that led to the adoption of the WebExtensions API. But I also understand why many extensions that used to rely on deeper integrations with the browser have refused to compromise with the new API and have been discontinued in the meantime.

I struggled a lot while developing this extension with the new API: the current WebExtensions API creates many sandboxes, makes each piece of information accessible only to a particular context (like the background script, the content script, or the popup), and forces developers who need data and features shared across multiple parts of their application to set up complex messaging systems to pass data around. It also puts hard constraints on which code can be executed where (good luck trying to get away with an eval in your code). With the Platypush web extension, I have tried to fill the void left by the deprecation of the previous extensions/apps/add-ons APIs and to provide a layer for power users to deeply customize the behavior of their browsers. I also wanted to build an extension that could give me easy access, from the same UI and the same button, to the increasingly fragmented world of smart devices around me. And I had also grown tired of seeing the space on my toolbar eaten up by tons of icons of extensions that could each perform only one specific task!
