Ultimate self-hosted automation with Platypush

Get started with Platypush to automate your smart home and beyond

In the last few years we have experienced a terrific spike of products and solutions targeting home automation and automation in general. After a couple of years of hype around IoT, assistants and home automation the market is slowly cooling down, and a few solutions are emerging out of the primordial oh-look-at-this-shiny-new-toy chaos.

There are however a couple of issues I’ve still got with most of the available solutions that led me to invest more time in building Platypush.

The rationale behind a self-hosted, open-source and modular automation service

First, one class of solutions for home automation is provided by large for-profit companies. Such solutions, like the Google Home platform and Alexa, are intuitive to set up and use, but they’re quite rigid when it comes to customization: you only get the integrations that Google or Amazon provide, based on the partnerships they decide to build; you can only use the user interfaces they provide, on the devices they decide to support; you can’t install the platform wherever you like or interact with it in any way outside of what the company provides; and such decisions are also subject to change over time. Additionally, a truly connected house will generate a lot of data about you (much more than what you generate by browsing the web), and I feel a bit uncomfortable sharing that data with people who don’t seem to mind sharing it with anyone else without my consent. Plus, the integrations provided by such platforms often break (see this, this and this, and they’re only a few examples). It can be quite annoying if all of a sudden you can no longer control your expensive lights or cameras from your expensive assistant or smart screen, and the code for controlling them runs on somebody else’s cloud, and that somebody else just informs you that “they’re working on that”.

Another issue with home automation solutions so far is fragmentation. You’ll easily have to buy 3–4 different bridges, devices or adapters if you want to control your smart lights, smart buttons, switches or a living room media centre. Compatibility with existing remotes is also neglected most of the time. Not to mention the number of apps you’ll have to download, each provided by a different author and each eating up space on your phone, and don’t expect many options to make them communicate with each other. Such fragmentation has indeed been one of the core issues I’ve tried to tackle when building Platypush, as I aimed to have only one central entry point, one control panel, one dashboard and one protocol to control everything, while still providing all the needed flexibility in terms of supported communication backends and APIs. The need for multiple bridges for home automation also goes away once you provide modules to manage Bluetooth, Zigbee and Z-Wave, and all you need to interact with your devices is a physical adapter connected to any kind of computer. The core idea is that, if there is a Python library or API to do what you want to do (or at least something that can be wrapped in some simple Python logic), then there should also be a plugin to effortlessly integrate it into the ecosystem you already have. It’s similar to the solution that Google later tried to provide with the device custom actions, even though the latter is limited to assistant interactions so far, and it’s currently subject to change because of the deprecation of the assistant library.

Another class of solutions for such problems comes from open source products like Home Assistant. Platypush and Home Assistant share quite a few things: they both started being developed around the same time; both started out as a bunch of scripts that we developers used to control our own things, and that we eventually ended up gluing together into a larger platform for general use; both are open source and developed in Python; and both aim to bring the logic for controlling your house inside your house instead of running it on somebody else’s cloud. There are, however, a couple of reasons why I eventually decided to keep working on my own platform instead of converging my efforts into Home Assistant.

First, Home Assistant has a strong Raspberry Pi-first approach. The suggested way to get started is to flash the Hass.io image to an SD card and boot up your RPi. While my solution is heavily tested within the Raspberry Pi ecosystem as well, it aims to run on any device that comes with a CPU and a Python interpreter. You can easily install and run it on any x86/x86_64 architecture too if you want to handle your automation logic on an old laptop, a desktop or any Intel-based micro-computer. You can easily run it on other single-board computers, such as the Asus Tinkerboard, any BananaPi or Odroid device. You can even run it on Android if you have an implementation of the Python interpreter installed, or run a stripped-down version on a microcontroller that runs MicroPython. While Home Assistant has tackled its growth and increasing complexity by narrowing down the devices it supports, and by providing pre-compiled OS and Docker images to reduce the complexity of getting it to run on bare metal, I've tried to keep Platypush as modular, lightweight and easy to set up and run as possible. You can run it in a Python virtual environment, in a Docker container, in a virtual machine or KVM: if you can name it, you can probably do it already. I have even managed to run it on an old Nokia N900, both on the original Maemo and on Arch Linux, and on several Android smartphones and tablets. And, most of all, it has a very small memory and CPU footprint. Running hotword detection, assistant, web panel, camera, lights, music control and a few sensors on a small Raspberry Pi Zero is guaranteed to take no more than 5-10% of CPU load and just a few MBs of RAM.

The flexibility of Platypush comes however with a slightly steeper learning curve, but it rewards the user with much more room for customization. You are expected to install it via pip or from the git repo, install the dependencies based on the plugins you want (although managing per-plugin dependencies is quite easy via pip), and manually create or edit a configuration file. But it provides much, much more flexibility. It can listen for messages on MQTT, HTTP (but you don’t have to run the webserver if you don’t want to), websocket, TCP socket, Redis, Kafka, Pushbullet: you name it, it has probably got it already. It allows you to create powerful procedures and event hooks written either in an intuitive YAML-based language or as drop-in Python scripts. Its original mission is to simplify home automation, but it doesn't stop there: you can use it to send text messages, read notifications from Android devices, control robots, turn your old box into a fully capable media center (with support for torrents, YouTube, Kodi, Plex, Chromecast, vlc, subtitles and many other players and formats) or a music center (with support for local collections, Spotify, SoundCloud, radios etc.), stream audio from your house, play raw or sampled sounds from a MIDI interface, monitor access to the file system on a server, run custom actions when some NFC tag is detected by your reader, read and extract content from RSS feeds and send it to your Kindle, read and write data to a remote database, send metrics to Grafana, create a custom voice assistant, run and train machine learning models, and so on. Basically, you can do anything that comes with one of the hundreds of supported plugins.

Another issue I’ve tried to tackle is the developer and power user experience. I wanted to make it easy to use the automation platform as a library or a general-purpose API, so you can easily invoke custom logic to turn on the lights or control the music in any of your custom scripts through something as simple as a get_plugin('light.hue').on() call, or by sending a simple JSON request over whichever API, queue or socket communication you have set up. I also wanted to make it easy to create complex custom actions (something like “when I get home, turn on the lights if it’s already dark, turn on the fan if it’s hot, the thermostat if it’s cold, the dehumidifier if it’s too humid, and play the music I was listening to on my phone”) through native pre-configured actions. This is similar to what is offered by Node-Red, but with more flexibility and ability to access the local context, and without delegating too much of the integration logic to other blocks; similar to what is offered by IFTTT and Microsoft Flow, but running in your own network instead of somebody else’s cloud; similar to the flexibility offered by apps like Tasker and AutoApps, but not limited to your Android device. My goal was also to build a platform that aims to be completely agnostic about how the messages are exchanged and which specific logic is contained in the plugins. As long as you’ve got plugins and backends that implement a certain small set of elements, you can plug them in.


Finally, extensibility was also a key factor I had in mind. I know how fundamental the contribution of other developers is if you want your platform to support as many things as possible out there. One of my goals has been to provide developers with the possibility of building a simple plugin in around 15 lines of Python code, and a UI integration in around 40 lines of HTML+Javascript code thanks to a consistent API that takes care of all the boilerplate.

Let’s briefly analyze how platypush is designed to better grasp how it can provide more features and flexibility than most of the platforms I’ve seen so far.

The building blocks

There are a couple of connected elements at the foundations of platypush that allow users to build whichever solution they like:

- Plugins: they are arguably the most important component of the platform. A plugin is a Python class that handles a type of device or service (like lights, music, calendar etc.), and it exposes a set of methods that enable you to programmatically invoke actions over those devices and services (like turn on, play, get upcoming events etc.). All plugins implement an abstract `Plugin` class, and their configuration maps transparently to the constructor arguments of the plugin itself, i.e. you can look at the constructor in the source code to understand which arguments a plugin takes, and you can fill in those values directly in your `config.yaml`. Example:

light.hue:
  bridge: 192.168.1.100
  groups:
    - Living Room
    - Bathroom

- Backends: they are threads that run in the background and listen for something to happen (an HTTP request, a websocket or message queue message, a voice assistant interaction, a new played song or movie…). When it happens, they will trigger events, and other parts of the platform can asynchronously react to those events.

- Messages: a message in platypush is just a simple JSON string that comes with a type and a set of arguments. You have three main types of messages on the platform:

- Requests: they are messages used to require a certain plugin action to be executed. The format of the action name is quite simple (`plugin_name.method_name`), and you can, of course, pass extra arguments to the action. Actions are mapped one-to-one to methods in the associated plugin class through the `@action` decorator. It means that a request object is transparent to the organization of the plugin, and such a paradigm enables the user to build flexible JSON-RPC-like APIs. For example, the `on` action of the `light.hue` plugin accepts lights and groups as optional parameters. It means that you can easily build a request like this and deliver it to platypush through whichever backend you prefer (note that `args` are optional in this case):

{
  "type": "request",
  "action": "light.hue.on",
  "args": {
    "groups": [
      "Living Room",
      "Bathroom"
    ]
  }
}

If you have the HTTP backend running, for example, you can easily dispatch such a request to it through the available JSON-RPC execute endpoint.

First create a user through the web panel at http://localhost:8008, then generate a token for the user to authenticate the API calls. You can easily generate a token from the web panel itself (Settings -> Generate token). Store the token in an environment variable (e.g. $PP_TOKEN) and pass it in your calls through the Authorization: Bearer header:

# cURL example
curl -XPOST -H 'Content-Type: application/json' \
  -H "Authorization: Bearer $PP_TOKEN" \
  -d '{"type":"request", "action":"light.hue.on", "args": {"groups": ["Living Room", "Bedroom"]}}' \
  http://localhost:8008/execute

# HTTPie example
echo '{
  "type":"request",
  "action":"light.hue.on",
  "args": {
    "groups": ["Living Room", "Bedroom"]
  }
}' | http http://localhost:8008/execute "Authorization: Bearer $PP_TOKEN"

And you can also easily send requests programmatically through your own Python scripts, basically using Platypush as a library in other scripts or projects:

from platypush.context import get_plugin

response = get_plugin('light.hue').on(groups=['Living Room', 'Bathroom'])

print(response)
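If you would rather not import Platypush into the calling script, the same request can be built and posted with nothing but the Python standard library. The following is a minimal sketch, not part of Platypush itself: `build_request` and `send_request` are hypothetical helper names, and the URL, port and `PP_TOKEN` variable simply mirror the cURL example above.

```python
import json
import os
import urllib.request


def build_request(action, **args):
    """Build a Platypush-style request message as a plain dict."""
    msg = {"type": "request", "action": action}
    if args:  # "args" is optional, so it is omitted when nothing is passed
        msg["args"] = args
    return msg


def send_request(msg, url="http://localhost:8008/execute", token=None):
    """POST the message to the /execute endpoint and return the decoded JSON reply.

    Assumes the HTTP backend is running and that a valid token is available.
    """
    token = token or os.environ.get("PP_TOKEN", "")
    req = urllib.request.Request(
        url,
        data=json.dumps(msg).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


request = build_request("light.hue.on", groups=["Living Room", "Bedroom"])
print(json.dumps(request, indent=2))
# With a running instance you could then call: send_request(request)
```

Keeping the message construction separate from the transport makes it easy to reuse the same dict over a different backend (a queue or a socket, for instance).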

- Responses: they are messages that contain the `output` and `errors` resulting from the execution of a request. If you send this request for example:

{
  "type": "request",
  "action": "light.hue.get_lights"
}

You'll get back something like this:

{
  "id": "6e5383cee53e8330afc5dfb9bde12a25",
  "type": "response",
  "target": "http",
  "origin": "your_server_name",
  "_timestamp": 1564154465.715452,
  "response": {
    "output": {
      "1": {
        "state": {
          "on": false,
          "bri": 254,
          "hue": 14916,
          "sat": 142,
          "effect": "none",
          "xy": [
            0.4584,
            0.41
          ],
          "ct": 366,
          "alert": "lselect",
          "colormode": "xy",
          "mode": "homeautomation",
          "reachable": true
        },
        "type": "Extended color light",
        "name": "Living Room Ceiling Right",
        "manufacturername": "Philips",
        "productname": "Hue color lamp"
      }
    },
    "errors": []
  }
}

If you send a request over a synchronous backend (e.g. the HTTP or TCP backend) then you can expect the response to be delivered back on the same channel. If you send it over an asynchronous backend (e.g. a message queue or a websocket) then the response will be sent asynchronously, creating a dedicated queue or channel if required.
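On the client side, a response like the one above can be unwrapped with a few lines of Python. This is only a sketch under the assumption that the reply follows the `response.output` / `response.errors` layout shown in the example; `unwrap_response` and `ActionError` are hypothetical names, not Platypush APIs.

```python
import json


class ActionError(RuntimeError):
    """Raised when a response message reports one or more errors."""


def unwrap_response(raw):
    """Return the `output` of a response message, or raise if it carries errors."""
    msg = json.loads(raw) if isinstance(raw, (str, bytes)) else raw
    if msg.get("type") != "response":
        raise ValueError(f"not a response message: {msg.get('type')!r}")
    body = msg.get("response", {})
    errors = body.get("errors") or []
    if errors:
        raise ActionError("; ".join(str(e) for e in errors))
    return body.get("output")


# Trimmed-down version of the example response
sample = """{
  "type": "response",
  "response": {
    "output": {"1": {"name": "Living Room Ceiling Right", "state": {"on": false}}},
    "errors": []
  }
}"""

lights = unwrap_response(sample)
for light_id, light in lights.items():
    print(light_id, light["name"], "on" if light["state"]["on"] else "off")
```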

- **Events**: they are messages that can be triggered by backends (and also some plugins) when a certain condition is verified. They can be delivered to connected web clients via websocket, or you can build your own custom logic on them through pre-configured *event hooks*. Event hooks are similar to applets on IFTTT or profiles in Tasker: they execute a certain action (or set of actions) when a certain event occurs. For example, if you enable the Google Assistant backend and some speech is detected then a `SpeechRecognizedEvent` will be fired. You can create an event hook like this in your configuration file to execute custom actions when a certain phrase is detected (note that regular expressions and extractions of parts from the phrase, at least to some extent, are also supported):

# Play a specific radio on the mpd (or mopidy) plugin
event.hook.PlayRadioParadiseAssistantCommand:
  if:
    type: platypush.message.event.assistant.SpeechRecognizedEvent
    phrase: "play (the)? radio paradise"
  then:
    action: music.mpd.play
    args:
      resource: tunein:station:s13606

# Search and play a song by an artist. Note the use of ${} to identify
# parts of the target attribute that should be preprocessed by the hook
event.hook.SearchSongVoiceCommand:
  if:
    type: platypush.message.event.assistant.SpeechRecognizedEvent
    phrase: "play ${title} by ${artist}"
  then:
    - action: music.mpd.clear  # Clear the current playlist
    - action: music.mpd.search
      args:
        artist: ${artist}  # Note the special map variable "context" to access data from the current context
        title: ${title}

    # music.mpd.search will return a list of results under "output". context['output'] will
    # therefore always contain the output of the last request. We can then get the first
    # result and play it.
    - action: music.mpd.play
      args:
        resource: ${context['output'][0]['file']}

You may have noticed that you can wrap Python expressions in ${}. You can also access context data through the special context variable. As we saw in the example above, that allows you to easily access the output and errors of the latest executed command (but also the event that triggered the hook, through context.get('event')). If the previous command returned a key-value map, or if we extracted named-value pairs from one of the event arguments, then you can also omit the context and access those directly by name. In the example above, for instance, you can access the artist either through context.get('artist') or simply artist.

And you can also define event hooks directly in Python by just creating a script under ~/.config/platypush/scripts:

from platypush.context import get_plugin
from platypush.event.hook import hook
from platypush.message.event.assistant import SpeechRecognizedEvent

@hook(SpeechRecognizedEvent, phrase='play (the)? radio paradise')
def play_radio_hook(event, **context):
    mpd = get_plugin('music.mpd')
    mpd.play(resource='tunein:station:s13606')
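To get an intuition for how named parts such as `${title}` and `${artist}` can be pulled out of a recognized phrase and end up in the hook context, here is a toy sketch based on regular expressions. It is not Platypush's actual matching code (which may tokenize and score phrases differently); `phrase_to_regex` and `extract` are hypothetical helpers written only for illustration.

```python
import re


def phrase_to_regex(pattern):
    """Turn 'play ${title} by ${artist}' into a regex with named groups."""
    # Splitting on a pattern with one capture group alternates
    # literal text (even indexes) and variable names (odd indexes)
    parts = re.split(r"\$\{(\w+)\}", pattern)
    regex = ""
    for i, part in enumerate(parts):
        if i % 2 == 0:
            regex += part  # literal text is kept as-is, so it may itself be a regex
        else:
            regex += f"(?P<{part}>.+?)"  # lazy match for the named value
    return re.compile(rf"^\s*{regex}\s*$", re.IGNORECASE)


def extract(pattern, phrase):
    """Return the named values found in the phrase, or None if it doesn't match."""
    match = phrase_to_regex(pattern).match(phrase)
    return match.groupdict() if match else None


context = extract("play ${title} by ${artist}", "play imagine by john lennon")
print(context)
```

The resulting dictionary plays the role of the named values that the hook can then reference directly, as in `${artist}` and `${title}`.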

- Procedures: the last fundamental element of platypush are procedures. They are groups of actions that can embed more complex logic, like conditions and loops. They can also embed small snippets of Python logic to access the context variables or evaluate expressions, as we have seen in the event hook example. For example, this procedure can execute some custom code when you get home that queries a luminosity and a temperature sensor connected over USB interface (e.g. Arduino), and turns on your Hue lights if it’s below a certain threshold, says a welcome message over the text-to-speech plugin, switches on a fan connected over a TPLink smart plug if the temperature is above a certain threshold, and plays your favourite Spotify playlist through mopidy:

# Note: procedures can be synchronous (`procedure.sync` prefix) or asynchronous
# (`procedure.async` prefix). In a synchronous procedure the logic will wait for
# each action to be completed before proceeding with the next - useful if you
# want to link actions together, letting each action access the response of the
# previous one(s). An asynchronous procedure will execute instead all the actions
# in parallel. Useful if you want to execute a set of actions independent from
# each other, but be careful not to stack too many of them - each action will be
# executed in a new thread.
procedure.sync.at_home:
  - action: serial.get_measurement
  # Your device should return a JSON over the serial interface structured like:
  # {"luminosity":45, "temperature":25}

  # Note that you can either access the full output of the previous command through
  # the `output` context variable, as we saw in the event hook example, or, if the
  # output is a JSON-like object, you can access individual attributes of it
  # directly through the context. It is indeed usually more handy to access individual
  # attributes like this: the `output` context variable will be overwritten by the
  # next response, while the individual attributes of a response will remain until
  # another response overwrites them (they're similar to local variables)
  - if ${luminosity < 30}:
    - action: light.hue.on

  - if ${temperature > 25}:
    - action: switch.tplink.on
      args:
        device: Fan

  - action: tts.google.say
    args:
      text: Welcome home

  - action: music.mpd.play
    args:
      resource: spotify:user:1166720951:playlist:0WGSjpN497Ht2wYl0YTjvz

Again, you can also define procedures purely in Python by dropping a script under ~/.config/platypush/scripts. Just make sure that those procedures are also imported in ~/.config/platypush/scripts/__init__.py so they are visible to the main application:

# ~/.config/platypush/scripts/at_home.py
from platypush.procedure import procedure
from platypush.utils import run

@procedure
def at_home(**context):
    sensors = run('serial.get_measurement')

    if sensors['luminosity'] < 30:
        run('light.hue.on')

    if sensors['temperature'] > 25:
        run('switch.tplink.on', device='Fan')

    run('tts.google.say', text='Welcome home')
    run('music.mpd.play', resource='spotify:user:1166720951:playlist:0WGSjpN497Ht2wYl0YTjvz')

# ~/.config/platypush/scripts/__init__.py
from scripts.at_home import at_home

In both cases, you can call the procedure either from an event hook or directly through the API:

# cURL example
curl -XPOST -H 'Content-Type: application/json' \
  -H "Authorization: Bearer $PP_TOKEN" \
  -d '{"type":"request", "action":"procedure.at_home"}' \
  http://localhost:8008/execute

You can also create a Tasker profile with a WiFi-connected or an AutoLocation trigger that fires when you enter your home area, and that profile can send the JSON request to your platypush device over e.g. the HTTP /execute endpoint or MQTT, and you’ve got your custom welcome when you arrive home!
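The difference between synchronous and asynchronous procedures mentioned in the procedure comments can be pictured with a small standalone sketch. It does not use Platypush at all: `run_sync`, `run_async` and the two toy actions are hypothetical, and they only illustrate the idea that sequential actions can build on earlier results, while parallel ones (one thread each) cannot rely on each other.

```python
import threading


def run_sync(actions):
    """Run actions one after another; each one sees the results collected so far."""
    context = {}
    for name, func in actions:
        context[name] = func(context)
    return context


def run_async(actions):
    """Run every action in its own thread; actions get no shared context."""
    results = {}
    lock = threading.Lock()

    def worker(name, func):
        value = func({})  # empty context: other results are not available
        with lock:
            results[name] = value

    threads = [threading.Thread(target=worker, args=action) for action in actions]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results


def read_sensors(context):
    # Stand-in for a sensor reading such as {"luminosity": 45, "temperature": 25}
    return {"luminosity": 20, "temperature": 27}


def lights(context):
    # Only turns the lights on if a previous sensor reading says it is dark
    sensors = context.get("sensors", {})
    return "on" if sensors.get("luminosity", 100) < 30 else "unchanged"


actions = [("sensors", read_sensors), ("lights", lights)]
sync_result = run_sync(actions)
async_result = run_async(actions)
print(sync_result["lights"], async_result["lights"])
```

In the sequential run the lights action can see the sensor reading, while in the parallel run it cannot, which is why linked actions belong in a synchronous procedure.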

Now that you’ve got a basic idea of what’s possible with Platypush and what its main components are, it’s time to get your hands dirty, install it and configure your own plugins and rules.

Installation - Quickstart

First of all, Platypush relies on Redis as in-memory storage and internal message queue for delivering messages, so that's the only important dependency to install:

# Installation on Debian and derived distros

[sudo] apt install redis-server

# Installation on Arch and derived distros

[sudo] pacman -S redis

# Enable and start the service

[sudo] systemctl enable redis.service

[sudo] systemctl start redis.service

You can then install Platypush through pip:

[sudo] pip install platypush

Or through the Gitlab repo:

git clone https://git.platypush.tech/platypush/platypush

cd platypush

python setup.py build

[sudo] python setup.py install

In both cases, however, this will install only the dependencies for the core platform, which by design is kept very small and relies on backends and plugins to actually do things.

The dependencies for each integration are reported in the documentation of that integration itself (see the official documentation), and there are mainly four ways to install them:

- Through `pip` extras: this is probably the most immediate way, although (for now) it requires you to take a look at the `extras_require` section of the `setup.py` to find the name of the extra required by your plugin/backend. For example, a common use case usually includes enabling the HTTP backend (for the `/execute` endpoint and for the UI), and perhaps you may want to enable a plugin for managing your lights (e.g. `light.hue`), your music server (e.g. `music.mpd`) and your Chromecasts (e.g. `media.chromecast`). If that's the case, then you can just get the name of the extras required by these integrations from `setup.py` and install them via pip:

# If you are installing Platypush directly from pip

[sudo] pip install 'platypush[http,hue,mpd,chromecast]'

# If you are installing Platypush from sources

cd /your/path/to/platypush

[sudo] pip install '.[http,hue,mpd,chromecast]'

- From `requirements.txt`. The file reports the core required dependencies as uncommented lines, and optional dependencies as commented lines. Uncomment the dependencies you need for your integrations and then from the Platypush source directory type:

cd /your/path/to/platypush

[sudo] pip install -r requirements.txt

- Manually. The official documentation reports the dependencies required by each integration and the commands to install them, so an option would be to simply paste those commands. Another way to check the dependencies is by inspecting the `__doc__` item of a plugin or backend through the Python interpreter itself:

>>> from platypush.context import get_plugin

>>> plugin = get_plugin('light.hue')

>>> print(plugin.__doc__)

Philips Hue lights plugin.

Requires:

* **phue** (``pip install phue``)

- Through your OS package manager. This may actually be the best option if you want to install Platypush globally and not in a virtual environment or in your user dir, as it helps keep your system Python libraries clean without too much pollution from `pip` modules, and it lets your package manager take care of installing updates when they are available or when you upgrade your version of Python. However, you may have to map the dependencies provided by each integration to the corresponding package name on Debian/Ubuntu/Arch/CentOS etc.

Configuration

Once we have our dependencies installed, it’s time to configure the plugins. For example, if you want to manage your Hue lights and your music server, create a ~/.config/platypush/config.yaml configuration file (it's always advised to run Platypush as a non-privileged user) with a configuration that looks like this:

# Enable the web service and UI
backend.http:
  enabled: True

light.hue:
  bridge: 192.168.1.10  # IP address of your Hue bridge
  groups:               # Default groups that you want to control
    - Living Room

# Enable also the light.hue backend to get events when the status
# of the lights changes
backend.light.hue:
  poll_seconds: 20  # Check every 20 seconds

music.mpd:
  host: localhost

# Enable either backend.music.mpd, or backend.music.mopidy if you
# use Mopidy instead of MPD, to receive events when the playback state
# or the played track change
backend.music.mpd:
  poll_seconds: 10  # Check every 10 seconds

A few notes:

- The list of events triggered by each backend is also available in the documentation of those backends, and you can write your own hooks (either in YAML inside of `config.yaml` or as Python drop-in scripts) to capture them and execute custom logic. For instance, `backend.light.hue` can trigger `platypush.message.event.light.LightStatusChangeEvent`.

- By convention, plugins are identified by the lowercase name of their class without the `Plugin` suffix (e.g. `light.hue`), while backends are identified by the lowercase name of their class without the `Backend` suffix.

- The configuration of a plugin or backend expects exactly the parameters of the constructor of its class, so it's very easy to look either at the source code or the documentation and get the parameters required by the configuration and their default values.

- By default, the HTTP backend will run the web service directly through a Flask wrapper. If you are planning to run the service on a machine with more traffic or in production mode, then it's advised to use a uwsgi+nginx wrapper; the official documentation explains how to do that.

- If the plugin or the backend doesn't require parameters, or if you want to keep the default values for the parameters, then its configuration can simply contain `enabled: True`.
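Since the configuration of an integration mirrors the constructor of its class, the available options and their defaults can be listed with Python's `inspect` module. The sketch below uses a made-up `FakeHuePlugin` class as a stand-in (its arguments are illustrative and not necessarily those of the real `light.hue` plugin); `config_options` is likewise a hypothetical helper.

```python
import inspect


class FakeHuePlugin:
    """Hypothetical stand-in for a plugin class; not the real light.hue plugin."""

    def __init__(self, bridge, lights=None, groups=None, poll_seconds=20.0):
        self.bridge = bridge
        self.lights = lights or []
        self.groups = groups or []
        self.poll_seconds = poll_seconds


def config_options(cls):
    """Map each constructor argument to its default, or 'REQUIRED' if it has none."""
    options = {}
    for name, param in inspect.signature(cls.__init__).parameters.items():
        if name == "self":
            continue
        if param.kind in (inspect.Parameter.VAR_POSITIONAL, inspect.Parameter.VAR_KEYWORD):
            continue  # *args / **kwargs don't map to a single config key
        has_default = param.default is not inspect.Parameter.empty
        options[name] = param.default if has_default else "REQUIRED"
    return options


options = config_options(FakeHuePlugin)
for key, default in options.items():
    print(f"{key}: {default}")
```

With a real installation, the same helper could be pointed at the class of an actual plugin to see which keys its section of `config.yaml` accepts.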

You can now add your hooks and procedures either directly inside the config.yaml (if they are in YAML format) or in ~/.config/platypush/scripts (if they are in Python format). Also, the config.yaml can easily get messy, especially if you add many integrations, hooks and procedures, so you can split it into multiple files and use the include directive to include those external files (if relative paths are used then their reference base directory will be ~/.config/platypush):

include:
  - integrations/lights.yaml
  - integrations/music.yaml
  - integrations/media.yaml
  - hooks/home.yaml
  - hooks/media.yaml
  # - ...

Finally, launch the service from the command line:

$ platypush # If the executable is installed in your PATH

$ python -m platypush # If you want to run it from the sources folder

You can also create a systemd service for it and have it start automatically. Copy something like this to ~/.config/systemd/user/platypush.service:

[Unit]

Description=Universal command executor and automation platform

After=network.target redis.service

[Service]

ExecStart=/usr/bin/platypush

Restart=always

RestartSec=5

[Install]

WantedBy=default.target

Then:

systemctl --user start platypush # Will start the service

systemctl --user enable platypush # Will spawn it at startup

If everything went smoothly and you have e.g. enabled the Hue plugin, you will see a message like this in your logs/stdout:

Bridge registration error: The link button has not been pressed in the last 30 seconds.

As you may have guessed, you’ll need to press the link button on your Hue bridge to complete the pairing. After that, you’re ready to go, and you should see traces with information about your bridge popping up in your log file.

If you enabled the HTTP backend then you may want to point your browser to http://localhost:8008 to create a new user.

Then you can test the HTTP backend by sending e.g. a get_lights command:

curl -XPOST \
  -H 'Content-Type: application/json' \
  -H "Authorization: Bearer $PP_TOKEN" \
  -d '{"type":"request", "action":"light.hue.get_lights"}' \
  http://localhost:8008/execute

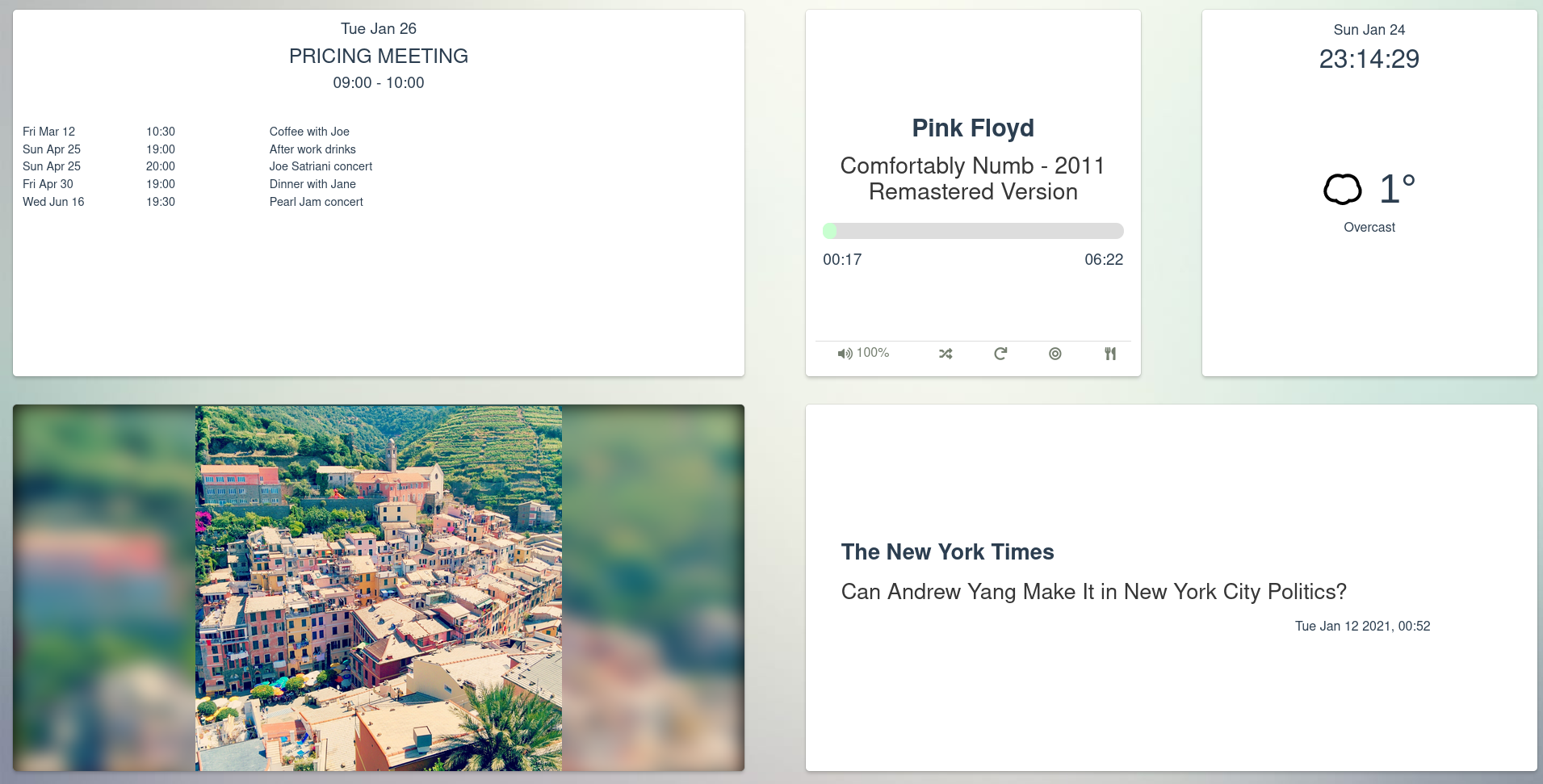

You may also notice that a panel is accessible on http://localhost:8008 upon login that contains the UI for each

integration that provides it. Example for the light.hue plugin:

Now you’ve got all the basic notions to start playing with Platypush on your own! Remember that everything you need to know to initialize a specific plugin, interact with it and install its dependencies is in the official documentation. Stay tuned for the next articles, which will show you how to do more with platypush: configure your voice assistant, control your media, manage your music collection, read data from sensors or NFC tags, control motors, enable multi-room music control, implement a security system for your house with real-time video and audio streams, control your switches and smart devices, turn your old TV remote into a universal remote to control anything attached to platypush, and much more.


I have been a quite strong advocate of Webmentions for a long time.

The idea is simple and powerful, and very consistent with the decentralized POSSE approach to content syndication.

Suppose that Alice finds an interesting article on Bob's website, at https://bob.com/article.

She writes a comment about it on her own website, at https://alice.com/comment.

If both Alice's and Bob's websites support Webmentions, then each of them will advertise a Webmentions endpoint, e.g. POST /webmentions.

When Alice publishes her comment, her website will send a Webmention to Bob's website, with the source URL (https://alice.com/comment) and the target URL (https://bob.com/article).

Bob's website will receive the Webmention, verify that the source URL actually mentions the target URL, and then display the comment on the article page.

No 3rd-party commenting system. No intermediate services. No social media login buttons. No ad-hoc comment storage and moderation solutions. Just a simple, decentralized, peer-to-peer mechanism based on existing Web standards.
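The exchange between Alice's and Bob's websites can be sketched with nothing but the Python standard library. This is a minimal sketch, not the library's implementation: it assumes that Bob's endpoint has already been discovered and lives at https://bob.com/webmentions, and the function names are made up for illustration. The only thing the protocol requires is a form-encoded POST with a source and a target field.

```python
from urllib.parse import urlencode
from urllib.request import Request, urlopen


def build_webmention_request(endpoint: str, source: str, target: str) -> Request:
    """Build the form-encoded POST that tells `endpoint` that `source` mentions `target`."""
    body = urlencode({"source": source, "target": target}).encode("ascii")
    return Request(
        endpoint,
        data=body,
        method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


def send_webmention(endpoint: str, source: str, target: str) -> int:
    """Send the Webmention and return the HTTP status code of the receiver."""
    request = build_webmention_request(endpoint, source, target)
    with urlopen(request, timeout=10) as response:
        return response.status


# Alice's website notifies Bob's website (assumed endpoint URL)
req = build_webmention_request(
    "https://bob.com/webmentions",
    source="https://alice.com/comment",
    target="https://bob.com/article",
)
```

Calling send_webmention with the same arguments would perform the actual request. A 2xx status means that the receiver accepted the mention, which it will typically verify asynchronously before displaying it.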

This is an alternative (and complementary) approach to federation mechanisms like ActivityPub, which are very powerful but also quite complex to implement, as implementations must deal with concepts such as actors, relays, followers, inboxes, outboxes, and so on.

Webmentions, by contrast, are purely peer-to-peer: they build on existing Web infrastructure and involve no intermediate actors or services.

Moreover, thanks to Microformats, Webmentions can be used to share any kind of content, not just comments: likes, reactions, RSVPs, media, locations, events, and so on.

However, while the concept is simple, implementing Webmentions support from scratch can be a bit cumbersome, especially if you want to do it right and support all the semantic elements.

I have thus proceeded to implement a simple Python library (but more bindings are on the backlog) that can be easily integrated into any website, and that takes care of all the details of the Webmentions protocol implementation. You only have to worry about writing good semantic HTML, and rendering Webmention objects in your pages.

Quick start

If you use FastAPI or Flask, serve your website as static files, and are fine with using an SQLAlchemy engine to store Webmentions, you can get started in a few lines of code.

    # For FastAPI bindings
    pip install "webmentions[db,file,fastapi]"

    # For Flask bindings
    pip install "webmentions[db,file,flask]"

Base implementation:

    import os

    from webmentions import WebmentionsHandler
    from webmentions.storage.adapters.db import init_db_storage
    from webmentions.storage.adapters.file import FileSystemMonitor

    # This should match the public URL of your website
    base_url = "https://example.com"

    # The directory that serves your static articles/posts.
    # HTML, Markdown and plain text are supported
    static_dir = "/srv/html/articles"

    # A function that takes a path to a created/modified/deleted text/* file
    # and maps it to a URL on the Web server to be used as the Webmention source
    def path_to_url(path: str) -> str:
        # Convert path (absolute) to a path relative to static_dir
        # and drop the extension.
        # For example, /srv/html/articles/2022/01/01/article.md
        # becomes 2022/01/01/article
        path = os.path.relpath(path, static_dir).rsplit(".", 1)[0].lstrip("/")
        # Convert the path to a URL on the Web server
        # For example, 2022/01/01/article
        # becomes https://example.com/articles/2022/01/01/article
        return f"{base_url.rstrip('/')}/articles/{path}"

    # For FastAPI
    from fastapi import FastAPI
    from webmentions.server.adapters.fastapi import bind_webmentions

    app = FastAPI()

    # For Flask (use these lines instead of the FastAPI ones above)
    from flask import Flask
    from webmentions.server.adapters.flask import bind_webmentions

    app = Flask(__name__)

    # ...Initialize your Web app as usual...

    # Create a Webmention handler
    handler = WebmentionsHandler(
        storage=init_db_storage(engine="sqlite:////tmp/webmentions.db"),
        base_url=base_url,
    )

    # Bind Webmentions to your app
    bind_webmentions(app, handler)

    # Create and start the filesystem monitor before running your app
    with FileSystemMonitor(
        root_dir=static_dir,
        handler=handler,
        file_to_url_mapper=path_to_url,
    ) as monitor:
        # Flask: app.run(...). FastAPI: start your ASGI server here,
        # e.g. uvicorn.run(app, ...)
        app.run(...)

This will:

- Register a POST /webmentions endpoint to receive Webmentions

- Advertise the Webmentions endpoint in every text/* response provided by the server

- Expose a GET /webmentions endpoint to list Webmentions (takes resource URL and direction (in or out) query parameters)

- Store Webmentions in a database (using SQLAlchemy)

- Monitor static_dir for changes to HTML or text files, automatically parse them to extract Webmention targets and sources, and send Webmentions when new targets are found

Generic Web framework setup

If you don't use FastAPI or Flask, or you want a higher degree of customization, you can still use the library by implementing and advertising your own Webmentions endpoint, which in turn will simply call WebmentionsHandler.process_incoming_webmention.

You will also have to advertise the Webmentions endpoint in your responses, through either:

- A Link header (with a value in the format <https://example.com/webmentions>; rel="webmention")

- A <link> or <a> element in the HTML head or body (in the format <link rel="webmention" href="https://example.com/webmentions">)

An example is provided in the documentation.
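To check that your own endpoint is advertised correctly, it can help to look at it from the sender's side. The following is a rough sketch using only the standard library, with helper names I made up for illustration (they are not part of the library): two functions that produce the Link header value and the HTML element, and one that discovers the endpoint from a page's HTML the way a sender would.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin


def link_header(endpoint: str) -> str:
    """Value of the HTTP Link header that advertises the endpoint."""
    return f'<{endpoint}>; rel="webmention"'


def link_tag(endpoint: str) -> str:
    """HTML element that advertises the endpoint."""
    return f'<link rel="webmention" href="{endpoint}">'


class _EndpointFinder(HTMLParser):
    """Remember the first <link> or <a> whose rel contains "webmention"."""

    def __init__(self) -> None:
        super().__init__()
        self.endpoint: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if self.endpoint is not None or tag not in ("link", "a"):
            return
        attributes = dict(attrs)
        rels = (attributes.get("rel") or "").lower().split()
        if "webmention" in rels and attributes.get("href") is not None:
            self.endpoint = attributes["href"]


def discover_endpoint(page_url: str, html: str) -> Optional[str]:
    """Return the absolute endpoint URL advertised in `html`, if any."""
    finder = _EndpointFinder()
    finder.feed(html)
    if finder.endpoint is None:
        return None
    # The href may be relative to the page that advertises it
    return urljoin(page_url, finder.endpoint)
```

A complete sender would check the Link header of the HTTP response first and fall back to the HTML only if the header is missing.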

Generic storage setup

If you don't want to use SQLAlchemy, you can implement your own storage by implementing the WebmentionsStorage interface (namely the store_webmention, retrieve_webmentions, and delete_webmention methods), then pass that to the WebmentionsHandler constructor.

An example is provided in the documentation.
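To give an idea of the shape of such a storage, here is a minimal in-memory sketch. Only the three method names come from the interface mentioned above. The parameter lists, the Mention class and the fact that the class doesn't inherit from WebmentionsStorage are all assumptions made to keep the example self-contained, so check the real signatures in the documentation before adapting it.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Mention:
    """Hypothetical stand-in for the library's Webmention object."""

    source: str
    target: str


class InMemoryStorage:
    """Keeps mentions in a dict keyed by target URL. Nothing is persisted."""

    def __init__(self) -> None:
        self._by_target: Dict[str, Dict[str, Mention]] = defaultdict(dict)

    # Assumed signature: the real method may take different arguments
    def store_webmention(self, mention: Mention) -> None:
        # Storing the same (source, target) pair again overwrites the old entry
        self._by_target[mention.target][mention.source] = mention

    # Assumed signature: the real method may also take a direction
    def retrieve_webmentions(self, target: str) -> List[Mention]:
        return list(self._by_target.get(target, {}).values())

    # Assumed signature: the real method may take different arguments
    def delete_webmention(self, source: str, target: str) -> None:
        self._by_target.get(target, {}).pop(source, None)
```

Keying on the (source, target) pair makes the storage idempotent, which matters because the same mention is usually sent again whenever the source page is updated.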

Manual handling of outgoing Webmentions

The FileSystemMonitor approach is quite convenient if you serve your website (or at least the mentionable parts of it) as static files.

However, if you have a more dynamic website (with posts and comments stored on e.g. a database), or you want to have more control over when Webmentions are sent, you can also call the WebmentionsHandler.process_outgoing_webmentions method whenever a post or comment is published, updated or deleted, to trigger the sending of Webmentions to the referenced targets.

An example is provided in the documentation.

Subscribe to mention events

You may want to add your own callbacks for when a Webmention is sent or received: for example, to notify your users when some of their content is mentioned, to keep track of the number of mentions sent by your pages, or to perform automated moderation or filtering when mentions are processed.

This can be easily achieved by passing custom callback functions (on_mention_processed and on_mention_deleted) to the WebmentionsHandler constructor. Both take a single Webmention object as a parameter.

An example is provided in the documentation.

Filtering and moderation

This library is intentionally agnostic about filtering and moderation, but it provides you with the means to implement your own filtering and moderation logic through the on_mention_processed and on_mention_deleted callbacks.

By default all received Webmentions are stored with WebmentionStatus.CONFIRMED status.

This can be changed by setting the initial_mention_status parameter of the WebmentionsHandler constructor to WebmentionStatus.PENDING, which will cause all received Webmentions to be stored but not visible on the website until they are manually confirmed by an administrator.

You can then use the on_mention_processed callback to implement your own logic to either notify the administrator of new pending mentions, or to automatically confirm them based on some criteria.

A minimal example is provided in the documentation.
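As an illustration of "some criteria", the simplest rule I can think of is an allowlist of domains whose mentions are confirmed automatically. The sketch below only implements the rule itself, on a plain source URL, with a made-up TRUSTED_DOMAINS set. How you read the source URL from the Webmention object and how you confirm the mention inside on_mention_processed depends on the library API, which is not shown here.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: replace it with the domains you trust
TRUSTED_DOMAINS = {"alice.com", "example.org"}


def is_trusted_source(source_url: str, trusted=TRUSTED_DOMAINS) -> bool:
    """True if the source URL belongs to a trusted domain or one of its subdomains."""
    host = (urlsplit(source_url).hostname or "").lower()
    # Match the domain itself or a subdomain, but not a domain that merely
    # ends with the same characters (e.g. evilalice.com)
    return any(host == domain or host.endswith("." + domain) for domain in trusted)
```

Mentions from any other source would stay pending until an administrator reviews them.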

Make your pages mentionable

Without good semantic HTML, Webmentions will be quite minimal. They will still work, but they will probably be rendered simply as a source URL and a creation timestamp.

The Webmention specification is intentionally simple, in that the POST endpoint only expects a source URL and a target URL. The rest of the information about the mention (the author, the content, the type of mention, any attachments, and so on) is all derived from the source URL, by parsing the HTML of the source page and extracting the relevant Microformats.

While the Microformats2 specification is quite flexible and a work-in-progress, there are a few basic elements whose usage is recommended to make the most out of Webmentions.

A complete example with a semantic-aware HTML article is provided in the documentation.
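To make the role of Microformats more concrete, here is a deliberately simplified sketch of what a receiver does with the source page: it checks that the page really links to the target, and it reads the title from the first element with a p-name class. It uses only the standard library, and the class and function names are made up. A real Microformats2 parser handles many more properties and edge cases than this.

```python
from html.parser import HTMLParser
from typing import Optional

# Elements that never have a closing tag
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class MentionParser(HTMLParser):
    """Collect the links on a page and the text of its first p-name element."""

    def __init__(self) -> None:
        super().__init__()
        self.links = set()
        self.name: Optional[str] = None
        self._depth = 0  # > 0 while we are inside the p-name element
        self._parts = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if attributes.get("href"):
            self.links.add(attributes["href"])
        if tag in VOID_TAGS:
            return
        if self._depth:
            self._depth += 1
        elif self.name is None and "p-name" in (attributes.get("class") or "").split():
            self._depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self._depth:
            return
        self._depth -= 1
        if self._depth == 0:
            self.name = "".join(self._parts).strip()

    def handle_data(self, data):
        if self._depth:
            self._parts.append(data)


def summarize_mention(source_html: str, target: str) -> Optional[dict]:
    """Return basic mention data, or None if the source doesn't link to the target."""
    parser = MentionParser()
    parser.feed(source_html)
    if target not in parser.links:
        return None
    return {"target": target, "name": parser.name}
```

Without the p-name class the mention would still be accepted, but its name would be empty, which is why mentions from pages without semantic markup end up rendered as little more than a URL.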

Rendering mentions on your pages

Finally, the last step is to render the received Webmentions on your pages.

A WebmentionsHandler.render_webmentions helper is provided to automatically generate a safe, pre-rendered and reasonably styled (but customizable through CSS variables) Markup object, which you can then render in your templates. Example:

    from fastapi import FastAPI, Request
    from fastapi.templating import Jinja2Templates

    # WebmentionDirection is assumed here to be exported by the top-level package
    from webmentions import WebmentionDirection, WebmentionsHandler
    from webmentions.server.adapters.fastapi import bind_webmentions

    base_url = "https://example.com"
    app = FastAPI()
    templates = Jinja2Templates(directory="templates")
    handler = WebmentionsHandler(...)
    bind_webmentions(app, handler)

    # ...

    @app.get("/articles/{article_id}")
    def article(request: Request, article_id: int):
        # Fetch the mentions received by this article
        mentions = handler.retrieve_webmentions(
            f"{base_url}/articles/{article_id}",
            WebmentionDirection.IN,
        )

        # Pre-render them to a Markup object that is safe to embed
        rendered_mentions = handler.render_webmentions(mentions)

        return templates.TemplateResponse(
            "article.html",
            {
                "request": request,
                "article_id": article_id,
                "mentions": rendered_mentions,
            },
        )

Where article.html is a Jinja template that looks like this:

    <!doctype html>
    <html>
      <head>
        <title>Example article</title>
      </head>
      <body>
        <main>
          <article class="h-entry">
            <h1 class="p-name">Example article</h1>
            <time class="dt-published" datetime="2026-02-07T21:03:00+01:00">
              Feb 7, 2026
            </time>
            <div class="e-content">
              <p>Your article content goes here.</p>
            </div>
          </article>

          {{ mentions }}
        </main>
      </body>
    </html>

More details are provided in the documentation.

For more customized rendering, a reference Jinja template is also provided in the documentation.

Current implementations

So far the library is used in madblog, a minimal zero-database Markdown-based blogging engine I maintain, which powers both my personal blog and the Platypush blog.

You can see some Webmentions in action on some of my blog posts.

And if you include a link to any of my articles on your website, and your website supports Webmentions (for example, there is a WordPress plugin for that), you should see the mention appear in the comments of the article page.

Links